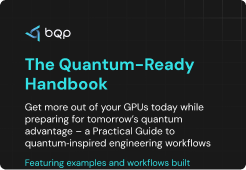

Engineering optimization is not a single method; it is a family of approaches, each designed for specific problem structures. Choosing the wrong method for the problem class is one of the most common reasons optimization workflows underperform. A gradient-based solver applied to a multimodal design space is likely to return a locally good result that misses the global optimum. A metaheuristic applied to a simulation-heavy problem can require thousands of expensive CFD evaluations and become computationally prohibitive before it converges.

In aerospace, structural design, and advanced manufacturing, method selection directly affects solution quality, computation time, and whether the engineering team finds a genuinely optimal result or settles for a locally good one that leaves performance on the table.

This guide covers:

- The core families of engineering optimization methods and how each works

- Where each method breaks down and why problem class determines the right choice

- How quantum-inspired methods extend the optimization toolkit for high-dimensional, simulation-heavy engineering problems

Gradient-Based vs Metaheuristic: The Two Foundational Categories of Engineering Optimization

Most numerical engineering optimization methods fall into one of two broad categories: deterministic (gradient-based) or stochastic (derivative-free / metaheuristic). Every method discussed in this guide is a variant, hybrid, or extension of one or both.

Deterministic methods use mathematical information about the objective function (gradients and, for second-order methods, Hessians) to move toward an optimum. They are computationally efficient and precise when that information is available and reliable. When it is not, they fail quickly.

Stochastic methods make no assumptions about the objective function's mathematical structure. They explore the search space through populations of candidate solutions, trading computational efficiency for robustness on non-convex, noisy, or black-box problems.

In practice, most real engineering problems fall between these two extremes, which is why hybrid approaches and quantum-inspired methods represent the productive frontier.

Gradient-Based Optimization Methods in Engineering: How They Work and Where They Fail

Gradient-based methods are the oldest and most computationally efficient family of engineering optimization approaches. They remain dominant for well-defined, smooth, low-dimensional design problems where derivative information is available and reliable.

1. How Gradient-Based Methods Work

Gradient-based methods use derivative information, the gradient of the objective function with respect to the design variables, to choose a descent direction at each iteration; plain gradient descent steps along the negative gradient, the direction of steepest descent. Established methods include gradient descent, conjugate gradient, Sequential Quadratic Programming (SQP), and Newton's method, with adjoint-based sensitivity analysis supplying the gradients in CFD and structural problems. The adjoint method is particularly valuable in aerodynamic shape optimization because it computes sensitivities at a cost nearly independent of the number of design variables: one additional adjoint solve per objective or constraint, rather than one extra simulation per variable.
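To make the iteration concrete, here is a minimal steepest-descent loop with a backtracking (Armijo) line search. The test function `bowl`, its gradient, and the line-search constants are illustrative assumptions; production workflows would normally call an established SQP or quasi-Newton solver instead.

```python
def bowl(x):
    # Smooth, convex, mildly ill-conditioned test objective with its minimum at (3, -1)
    return (x[0] - 3.0) ** 2 + 10.0 * (x[1] + 1.0) ** 2

def bowl_grad(x):
    # Analytic gradient of bowl(x)
    return [2.0 * (x[0] - 3.0), 20.0 * (x[1] + 1.0)]

def steepest_descent(f, grad, x0, iters=500, c=1e-4, shrink=0.5, tol=1e-16):
    x = list(x0)
    for _ in range(iters):
        g = grad(x)
        g_norm2 = sum(gi * gi for gi in g)
        if g_norm2 < tol:
            break  # gradient is numerically zero: stationary point reached
        t, fx = 1.0, f(x)
        # Backtracking: shrink the step until the Armijo sufficient-decrease test holds
        while f([xi - t * gi for xi, gi in zip(x, g)]) > fx - c * t * g_norm2:
            t *= shrink
        x = [xi - t * gi for xi, gi in zip(x, g)]  # step along the negative gradient
    return x

x_opt = steepest_descent(bowl, bowl_grad, [0.0, 0.0])
print(x_opt)  # close to [3.0, -1.0]
```

On a smooth, well-conditioned problem like this one, convergence is fast and reliable; the same loop is exactly what gets stuck on multimodal landscapes.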

2. Where Gradient-Based Methods Excel

These methods are efficient on smooth, convex, differentiable problems with moderate dimensionality. For aerodynamic shape refinement, adjoint CFD methods can optimize airfoil geometry across hundreds of design variables without prohibitive computation cost. Convergence is fast when the objective function landscape is well-behaved and the starting point is near the optimum.
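The cost argument for adjoint methods can be checked on a tiny discrete model. Assume, hypothetically, a linear "solver" K(p) u = f whose matrix depends affinely on the design parameters, K(p) = K0 + p[0]·K1 + p[1]·K2, and an objective J = c^T u. Differentiating K u = f gives dJ/dp_i = -λ^T K_i u, where λ solves the single adjoint system K^T λ = c, so the whole gradient costs one extra solve no matter how many parameters there are. The matrices below are made-up stand-ins for a CFD or FEA discretization, and finite differences are included only as a check.

```python
def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting (small dense systems)."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]  # augmented matrix
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(M[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x

def transpose(A):
    return [list(col) for col in zip(*A)]

def matvec(A, v):
    return [sum(a * vi for a, vi in zip(row, v)) for row in A]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

# Hypothetical 3-DOF model (deliberately non-symmetric so K^T differs from K)
K0 = [[4.0, -1.0, 0.0], [-2.0, 4.0, -1.0], [0.0, -1.0, 3.0]]
K1 = [[1.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
K2 = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.5], [0.0, 0.5, 1.0]]
f = [1.0, 2.0, 3.0]   # load vector
c = [0.0, 0.0, 1.0]   # J = c^T u picks out the last degree of freedom

def assemble(p):
    return [[K0[i][j] + p[0] * K1[i][j] + p[1] * K2[i][j] for j in range(3)] for i in range(3)]

def objective(p):
    return dot(c, solve(assemble(p), f))

def adjoint_gradient(p):
    K = assemble(p)
    u = solve(K, f)                # one forward solve
    lam = solve(transpose(K), c)   # one adjoint solve, independent of len(p)
    return [-dot(lam, matvec(Ki, u)) for Ki in (K1, K2)]

p = [0.3, 0.7]
grad_adj = adjoint_gradient(p)

# Central finite differences for comparison: two extra solves per parameter
h = 1e-6
grad_fd = []
for i in range(len(p)):
    pp, pm = p[:], p[:]
    pp[i] += h
    pm[i] -= h
    grad_fd.append((objective(pp) - objective(pm)) / (2 * h))
print(grad_adj, grad_fd)
```

With hundreds of shape parameters the finite-difference loop would need hundreds of extra flow solves, while the adjoint path would still need only one.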

3. Where Gradient-Based Methods Break Down

Local optima trapping is the primary failure mode. Gradient-based methods descend toward the nearest local minimum from the starting point. On multimodal problems, which include many real engineering design problems, the result depends strongly on initialization and can be far from the global optimum. The method itself has no mechanism to escape a local valley once it has descended into one.
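A one-dimensional example shows the initialization dependence. The double-well function and step size below are illustrative assumptions: plain gradient descent started at x = 2 settles in the shallow local minimum near x ≈ 1.13, while the same method started at x = -2 reaches the deeper, global minimum near x ≈ -1.30.

```python
def f(x):
    # Double-well test function: shallow local minimum near x = 1.13,
    # global minimum near x = -1.30
    return x**4 - 3.0 * x**2 + x

def df(x):
    # Analytic derivative of f
    return 4.0 * x**3 - 6.0 * x + 1.0

def gradient_descent(x0, lr=0.01, steps=2000):
    x = x0
    for _ in range(steps):
        x -= lr * df(x)  # fixed-step descent along the negative gradient
    return x

x_right = gradient_descent(2.0)   # ends in the shallow local minimum
x_left = gradient_descent(-2.0)   # ends in the global minimum
print(f"start  2.0 -> x = {x_right:.4f}, f = {f(x_right):.4f}")
print(f"start -2.0 -> x = {x_left:.4f}, f = {f(x_left):.4f}")
```

Nothing in the update rule can tell the first run that a better valley exists; only a different starting point (or a global search method) reveals it.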

They also require differentiable objective functions. In simulation-driven engineering, where each function evaluation involves a CFD or FEA run, gradient computation can be expensive, is sometimes unavailable, and is sensitive to numerical noise. For discrete, topology-level design decisions and multi-objective trade-off problems, a single gradient-based run works on the wrong abstraction: it returns one locally optimal design rather than a map of alternatives.
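The noise sensitivity is easy to reproduce. In this sketch a smooth objective is contaminated by a hypothetical high-frequency term of amplitude 1e-6, a stand-in for discretization or solver-convergence noise. A tiny finite-difference step turns that invisible noise into a gradient error of order 10, while a larger step averages it out (on genuinely curved objectives, at the price of more truncation error).

```python
import math

def noisy_objective(x):
    # Smooth "physics" term x**2 plus a hypothetical tiny, high-frequency noise term
    return x**2 + 1e-6 * math.sin(1e7 * x)

def central_difference(f, x, h):
    return (f(x + h) - f(x - h)) / (2.0 * h)

# The smooth part has derivative exactly 0 at x = 0
for h in (1e-8, 1e-4, 1e-2):
    est = central_difference(noisy_objective, 0.0, h)
    print(f"h = {h:.0e}: estimated gradient = {est:+.6f}")
```

The true derivative at x = 0 is 0, yet the h = 1e-8 estimate comes out near 10, which is enough to send a gradient-based optimizer in the wrong direction.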

For smooth, well-structured design problems with reliable gradient information, these methods remain the right choice. As problem complexity grows, their limitations become progressively more constraining.

Metaheuristic Optimization Methods for Complex Engineering Problems

Metaheuristic methods were developed specifically for the problems gradient-based approaches cannot handle: multimodal, non-differentiable, black-box objective functions with complex constraint landscapes, where derivative information is either unavailable or unreliable.

1. How Metaheuristic Methods Work

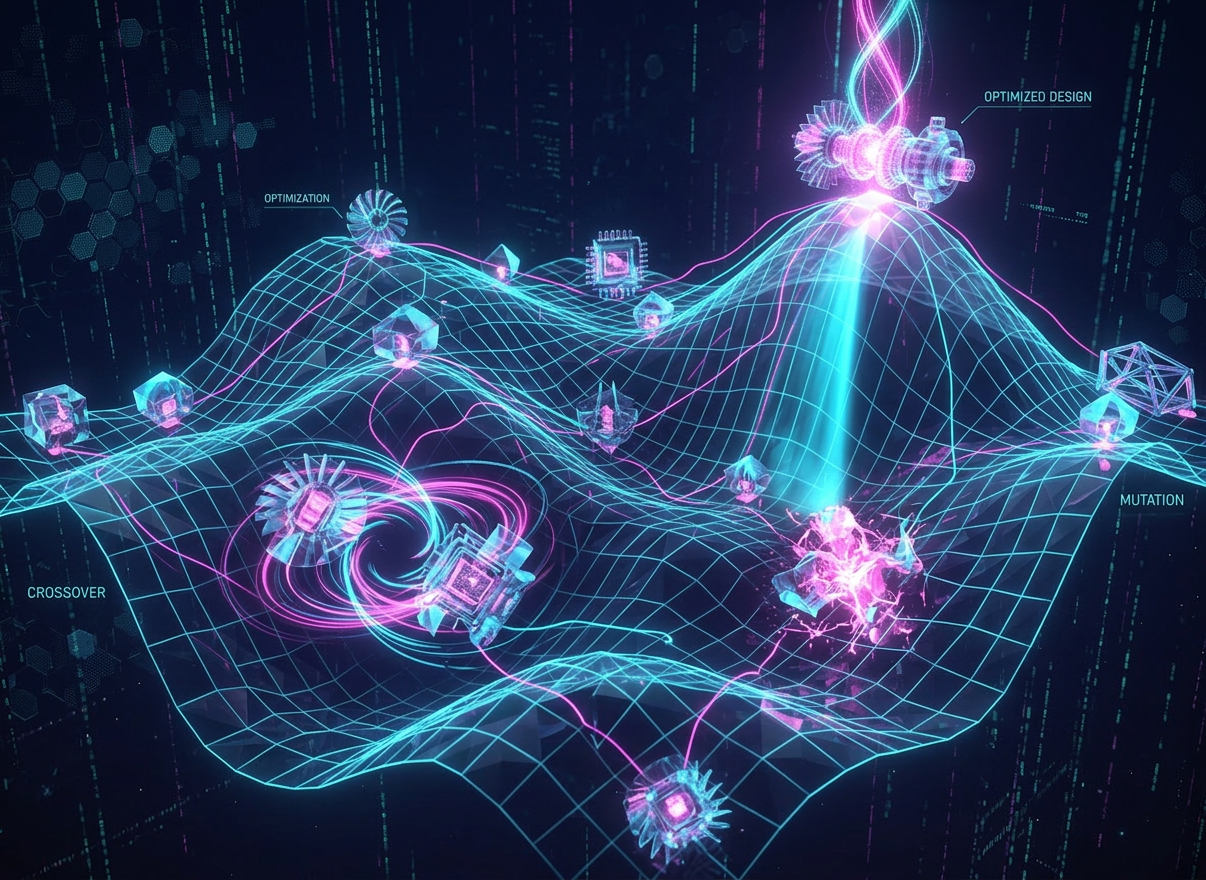

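Rather than following a gradient, most metaheuristics maintain and evolve a population of candidate solutions. Genetic Algorithms (GA), Particle Swarm Optimization (PSO), Differential Evolution (DE), and Simulated Annealing (SA) are the most established. SA is the main exception to the population model: it evolves a single candidate and occasionally accepts worse moves so it can climb out of local minima.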

They use selection, mutation, crossover, swarm dynamics, or probabilistic acceptance rules to explore the solution space without requiring derivative information, which makes them applicable to any problem where the objective function can be evaluated, regardless of its mathematical structure.
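Simulated annealing is the simplest way to see how a derivative-free method escapes a local minimum. This sketch (test function, geometric cooling schedule, step size, and seed are all illustrative assumptions) starts at x = 2.0, inside the basin of the shallow local minimum of a double-well function, and relies on occasional uphill moves, accepted with probability exp(-Δf / T), to cross into the deeper basin.

```python
import math
import random

def f(x):
    # Double-well test function: shallow minimum near x = 1.13, global minimum near x = -1.30
    return x**4 - 3.0 * x**2 + x

def simulated_annealing(f, x0, lo=-3.0, hi=3.0, t0=2.0, t_end=1e-3,
                        steps=5000, step=0.5, seed=0):
    rng = random.Random(seed)
    x, fx = x0, f(x0)
    best_x, best_f = x, fx
    cooling = (t_end / t0) ** (1.0 / steps)  # geometric cooling from t0 down to t_end
    t = t0
    for _ in range(steps):
        cand = min(hi, max(lo, x + rng.uniform(-step, step)))  # random neighbour, clipped
        fc = f(cand)
        # Always accept improvements; accept worse moves with probability exp(-delta / T)
        if fc <= fx or rng.random() < math.exp(-(fc - fx) / t):
            x, fx = cand, fc
            if fx < best_f:
                best_x, best_f = x, fx
        t *= cooling
    return best_x, best_f

best_x, best_f = simulated_annealing(f, x0=2.0)
print(best_x, best_f)
```

Early on, while the temperature is high, the barrier of roughly 1.15 between the two basins is crossed easily; as T falls, the search settles and the best point found is tracked separately.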

2. Genetic Algorithms and Evolutionary Methods in Engineering Design

GA-based methods are particularly suited to topology optimization problems, combinatorial design spaces, and multi-objective engineering problems where the objective function is non-convex.

They explore multiple regions of the design space simultaneously, which often lets them find globally better solutions than gradient methods on multimodal problems. In structural design, GA-based approaches have been reported to find topologies that a gradient-based SIMP run misses from a given initialization.
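A minimal real-coded GA illustrates the selection, crossover, and mutation loop. Everything here (the multimodal Rastrigin benchmark, binary tournament selection, arithmetic crossover, Gaussian mutation, single-individual elitism, and every parameter value) is an illustrative choice; it is a generic sketch, not a topology-optimization GA.

```python
import math
import random

def rastrigin(x):
    # Multimodal benchmark: a grid of local minima, global minimum 0 at the origin
    return 10.0 * len(x) + sum(xi * xi - 10.0 * math.cos(2.0 * math.pi * xi) for xi in x)

def genetic_algorithm(f, dim=2, lo=-5.12, hi=5.12, pop_size=40, generations=100,
                      mutation_sigma=0.3, mutation_rate=0.2, seed=1):
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    history = []  # best objective value in each generation

    def tournament(scored):
        a, b = rng.sample(scored, 2)  # binary tournament selection (minimization)
        return a[1] if a[0] <= b[0] else b[1]

    for _ in range(generations):
        scored = sorted(((f(ind), ind) for ind in pop), key=lambda s: s[0])
        history.append(scored[0][0])
        children = [scored[0][1][:]]  # elitism: the best individual survives unchanged
        while len(children) < pop_size:
            p1, p2 = tournament(scored), tournament(scored)
            w = rng.random()
            child = [w * a + (1.0 - w) * b for a, b in zip(p1, p2)]  # arithmetic crossover
            child = [min(hi, max(lo, g + rng.gauss(0.0, mutation_sigma)))
                     if rng.random() < mutation_rate else g
                     for g in child]  # Gaussian mutation, clipped to the bounds
            children.append(child)
        pop = children
    best = min(pop, key=f)
    return best, f(best), history

best, best_f, history = genetic_algorithm(rastrigin)
print(best, best_f)
```

Elitism guarantees that the best-so-far value never gets worse from one generation to the next; crossover and mutation keep several basins in play at once.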

3. Particle Swarm and Differential Evolution

PSO and DE are population-based methods that use swarm dynamics or vector difference operators to guide search. Both are derivative-free, parameter-adaptive in modern variants, and effective on continuous, non-linear engineering design problems. Modern self-adaptive DE variants, including JADE, adjust the mutation factor and crossover rate automatically based on the parameter values that produced successful offspring in past generations, significantly improving convergence on complex engineering benchmarks.
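The DE vector-difference operator fits in a few lines. This is the classic DE/rand/1/bin scheme with fixed F and CR on a sphere test function, with parameters chosen for illustration. It is not JADE, which additionally adapts F and CR from successful offspring and uses a different mutation strategy (current-to-pbest).

```python
import random

def sphere(x):
    return sum(xi * xi for xi in x)

def differential_evolution(f, dim, lo, hi, pop_size=30, generations=300, F=0.5, CR=0.9, seed=2):
    """Classic DE/rand/1/bin with fixed F and CR; individuals are replaced in place."""
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    fit = [f(ind) for ind in pop]
    for _ in range(generations):
        for i in range(pop_size):
            # Three distinct individuals, all different from the target i
            r1, r2, r3 = rng.sample([j for j in range(pop_size) if j != i], 3)
            mutant = [pop[r1][d] + F * (pop[r2][d] - pop[r3][d]) for d in range(dim)]
            j_rand = rng.randrange(dim)  # guarantees at least one gene comes from the mutant
            trial = [min(hi, max(lo, mutant[d])) if (rng.random() < CR or d == j_rand)
                     else pop[i][d] for d in range(dim)]  # binomial crossover
            f_trial = f(trial)
            if f_trial <= fit[i]:  # greedy one-to-one selection
                pop[i], fit[i] = trial, f_trial
    best = min(range(pop_size), key=lambda k: fit[k])
    return pop[best], fit[best]

x_best, f_best = differential_evolution(sphere, dim=5, lo=-5.0, hi=5.0)
print(x_best, f_best)
```

The step size of the mutation scales automatically with the spread of the population, which is why DE needs little tuning on continuous problems; JADE-style adaptation goes further by learning F and CR during the run.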

4. The Function Evaluation Cost Problem

The critical limitation in engineering contexts is function evaluation cost. Metaheuristics require many objective function evaluations, often hundreds to thousands, to converge on a good solution. When each evaluation involves a CFD simulation that takes hours or an FEA solve that requires significant computation, population-based methods become computationally prohibitive at scale.
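A back-of-the-envelope estimate shows the scale. With purely hypothetical numbers (a population of 50, 100 generations, 4 hours per CFD evaluation, and 25 simulations able to run concurrently), a standard generational metaheuristic needs 5,000 evaluations, 20,000 simulation-hours, and about 800 hours (roughly 33 days) of wall-clock time.

```python
def evaluation_budget(pop_size, generations, hours_per_eval, parallel_slots):
    """Total evaluations, total simulation-hours, and wall-clock hours for a
    generational population-based search (generations run one after another)."""
    evaluations = pop_size * generations
    # Candidates within one generation can run in parallel, in batches of parallel_slots
    batches_per_generation = -(-pop_size // parallel_slots)  # ceiling division
    wall_clock_hours = generations * batches_per_generation * hours_per_eval
    return evaluations, evaluations * hours_per_eval, wall_clock_hours

# Hypothetical: 50 candidates x 100 generations, 4 h per CFD run, 25 runs at a time
evals, cpu_hours, wall_hours = evaluation_budget(50, 100, 4.0, 25)
print(evals, cpu_hours, wall_hours)  # 5000 20000.0 800.0
```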

Surrogate-assisted metaheuristics partially address this by substituting cheap approximation models for expensive simulations, but introduce their own accuracy trade-offs and additional workflow complexity.

Topology Optimization: The Most Computationally Demanding Engineering Design Method

Topology optimization determines the optimal material distribution within a design domain, answering not just how big or what shape, but where material should exist at all. It is among the most computationally demanding forms of structural engineering optimization and the problem class where method selection has the largest impact on both solution quality and compute cost.

1. The Classical SIMP Method

The Solid Isotropic Material with Penalization (SIMP) method is the standard topology optimization approach. It treats each element's material density as a continuous design variable, penalizes intermediate densities so the design is driven toward solid or void, and uses gradient-based sensitivity analysis to iteratively redistribute material across the design domain. SIMP is computationally efficient but prone to local optima: the final design depends on initialization and mesh resolution, and can miss globally superior topologies that a different starting configuration would reveal.
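The two core ingredients of a basic SIMP iteration can be sketched without a finite-element solver. The interpolation E(ρ) = E_min + ρ^p (E_0 - E_min) with p = 3 makes a half-dense element only 12.5% as stiff as a solid one while costing 50% of its material, which pushes the optimizer toward 0/1 designs. An optimality-criteria (OC) update then shifts material toward elements with large compliance sensitivity while a bisection on the Lagrange multiplier enforces the volume constraint. This follows the structure of common educational SIMP codes, but the sensitivities below are hypothetical numbers rather than the output of an FE analysis, and no sensitivity filter is applied.

```python
def simp_modulus(rho, E0=1.0, Emin=1e-9, p=3.0):
    """Modified SIMP interpolation: E(rho) = Emin + rho**p * (E0 - Emin)."""
    return Emin + rho ** p * (E0 - Emin)

def oc_update(rho, dc, volfrac, move=0.2):
    """Optimality-criteria density update.
    rho: current element densities in [0, 1]
    dc:  compliance sensitivities dC/drho (negative for compliance minimization)
    Unit element volumes are assumed, so every volume sensitivity equals 1.
    The volume-constraint multiplier is found by bisection."""
    l1, l2 = 0.0, 1e9
    while (l2 - l1) / (l1 + l2) > 1e-4:
        lmid = 0.5 * (l1 + l2)
        new = [max(0.0, r - move, min(1.0, r + move, r * (-d / lmid) ** 0.5))
               for r, d in zip(rho, dc)]
        if sum(new) > volfrac * len(rho):
            l1 = lmid  # too much material: increase the multiplier
        else:
            l2 = lmid  # volume satisfied: try a smaller multiplier
    return new

print(simp_modulus(0.5))  # about 0.125: half the material, one eighth of the stiffness

# Hypothetical sensitivities for six elements (in practice they come from an FE solve)
rho = [0.5] * 6
dc = [-4.0, -2.0, -1.0, -0.5, -0.25, -0.1]
new_rho = oc_update(rho, dc, volfrac=0.5)
print([round(r, 3) for r in new_rho])
```

Because each update only follows local sensitivity information, the converged layout inherits the same initialization dependence as any other gradient-based method.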

2. Hybrid Topology Optimization Approaches

Hybrid approaches combining GA with topology optimization explore the design space more broadly, reducing sensitivity to local optima. Research benchmarks on Kriging-assisted GA for CFD topology problems report that hybrid methods find superior designs compared to gradient-only SIMP, at the cost of higher function evaluation counts, with each evaluation involving a full CFD or FEA solve.

3. The Core Scalability Limitation

Topology optimization involves very large design spaces by construction. The many degrees of freedom for shape and material distribution make exhaustive population-based search impractical and leave gradient-based methods insufficiently global. As design domains grow in complexity (three-dimensional aerodynamic structures, composite material layouts, multi-physics thermal-structural problems), both classical approaches reach limits that can only be managed computationally by simplifying the problem. This is the design class where quantum optimization algorithms provide the clearest advantage.
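The size of the search space is easy to quantify for the simplest discrete version of the problem. If each element of a 2D mesh is either solid or void, an nx-by-ny mesh admits 2^(nx·ny) layouts; even a coarse 60 × 30 mesh gives a 542-digit number of candidates. The mesh sizes below are illustrative.

```python
import math

def binary_layouts_digits(nx, ny):
    """Decimal digits in 2**(nx*ny), the number of solid/void layouts on an nx-by-ny mesh."""
    n_elements = nx * ny
    # floor(n * log10(2)) + 1 digits, computed without materializing the huge integer
    return math.floor(n_elements * math.log10(2)) + 1

for nx, ny in [(10, 5), (60, 30), (120, 60)]:
    print(f"{nx}x{ny} mesh: 2^{nx * ny} layouts, a {binary_layouts_digits(nx, ny)}-digit number")
```

No population-based method can sample a meaningful fraction of such a space, which is why practical topology methods rely on continuous relaxations like SIMP or on strong search heuristics.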

Multi-Objective and Constrained Engineering Optimization

Most real engineering design problems are not single-objective. Structural weight, aerodynamic performance, thermal efficiency, manufacturability, and cost interact in ways that require simultaneous optimization across competing objectives, and most problems also come with hard constraints: stress limits, dimensional bounds, weight budgets, and performance thresholds.

Multi-objective evolutionary algorithms (MOEAs), of which NSGA-II is the most widely used, generate Pareto fronts that map trade-offs between objectives. This gives engineers a set of non-dominated solutions rather than a single output, enabling informed design trade-off decisions rather than forcing a premature reduction to a single objective.
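Non-dominated sorting, the ranking step at the core of NSGA-II, can be sketched in a few lines. This simplified version repeatedly peels off the current non-dominated set; it omits NSGA-II's faster bookkeeping scheme and its crowding-distance diversity measure, and the design scores are made up.

```python
def dominates(a, b):
    """True if design a Pareto-dominates design b (all objectives minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def non_dominated_fronts(points):
    """Split points into successive non-dominated fronts; front 0 is the Pareto front."""
    remaining = list(points)
    fronts = []
    while remaining:
        front = [p for p in remaining if not any(dominates(q, p) for q in remaining)]
        fronts.append(front)
        remaining = [p for p in remaining if p not in front]
    return fronts

# Hypothetical designs scored on (mass, peak stress) in arbitrary units, both minimized
designs = [(1, 5), (2, 3), (3, 4), (4, 1), (5, 5), (2, 2)]
fronts = non_dominated_fronts(designs)
print(fronts)  # [[(1, 5), (4, 1), (2, 2)], [(2, 3)], [(3, 4)], [(5, 5)]]
```

The first front is the set of trade-off designs an engineer would actually choose among: none of them can be improved in one objective without getting worse in the other.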

Constrained optimization adds further complexity. Inequality and equality constraints must all be satisfied simultaneously while optimizing the objective. Classical penalty methods handle constraints in gradient-based frameworks by adding a cost for constraint violation to the objective. Constraint handling in metaheuristics requires specialized techniques such as penalty functions, feasibility rules, or repair operators, and constraint satisfaction becomes increasingly difficult as constraint count grows.
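The classical quadratic penalty idea replaces the constrained problem with a sequence of unconstrained ones, adding μ·max(0, g(x))² to the objective and increasing μ. The toy problem (minimize (x0 - 2)² + (x1 - 1)² subject to x0 + x1 ≤ 2, whose solution is (1.5, 0.5)), the μ schedule, and the plain gradient-descent inner solver are illustrative assumptions.

```python
def f(x):
    # Objective: squared distance to the unconstrained optimum (2, 1)
    return (x[0] - 2.0) ** 2 + (x[1] - 1.0) ** 2

def g(x):
    # Inequality constraint written as g(x) <= 0, i.e. x0 + x1 <= 2
    return x[0] + x[1] - 2.0

def penalized_grad(x, mu):
    # Gradient of f(x) + mu * max(0, g(x))**2
    viol = max(0.0, g(x))
    return [2.0 * (x[0] - 2.0) + 2.0 * mu * viol,
            2.0 * (x[1] - 1.0) + 2.0 * mu * viol]

def quadratic_penalty(x0, mus=(1.0, 10.0, 100.0, 1000.0), inner_steps=200):
    x = list(x0)
    for mu in mus:  # each stage warm-starts from the previous solution
        lr = 1.0 / (2.0 + 4.0 * mu)  # 1 / (largest Hessian eigenvalue) for this mu
        for _ in range(inner_steps):
            grad = penalized_grad(x, mu)
            x = [xi - lr * gi for xi, gi in zip(x, grad)]
    return x

x = quadratic_penalty([0.0, 0.0])
print(x, g(x))  # approaches (1.5, 0.5); the small violation shrinks as mu grows
```

The constraint is only satisfied in the limit: each finite μ leaves a small violation, which is one reason augmented Lagrangian and SQP methods are often preferred in practice.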

As the number of objectives and constraints grows, solution spaces become exponentially harder to explore. This is the problem class where the evaluation cost of population-based methods becomes most prohibitive, and where quantum-inspired optimization for aerospace and defense offers the clearest computational advantage: exploring competing constraint spaces in parallel rather than sequentially.

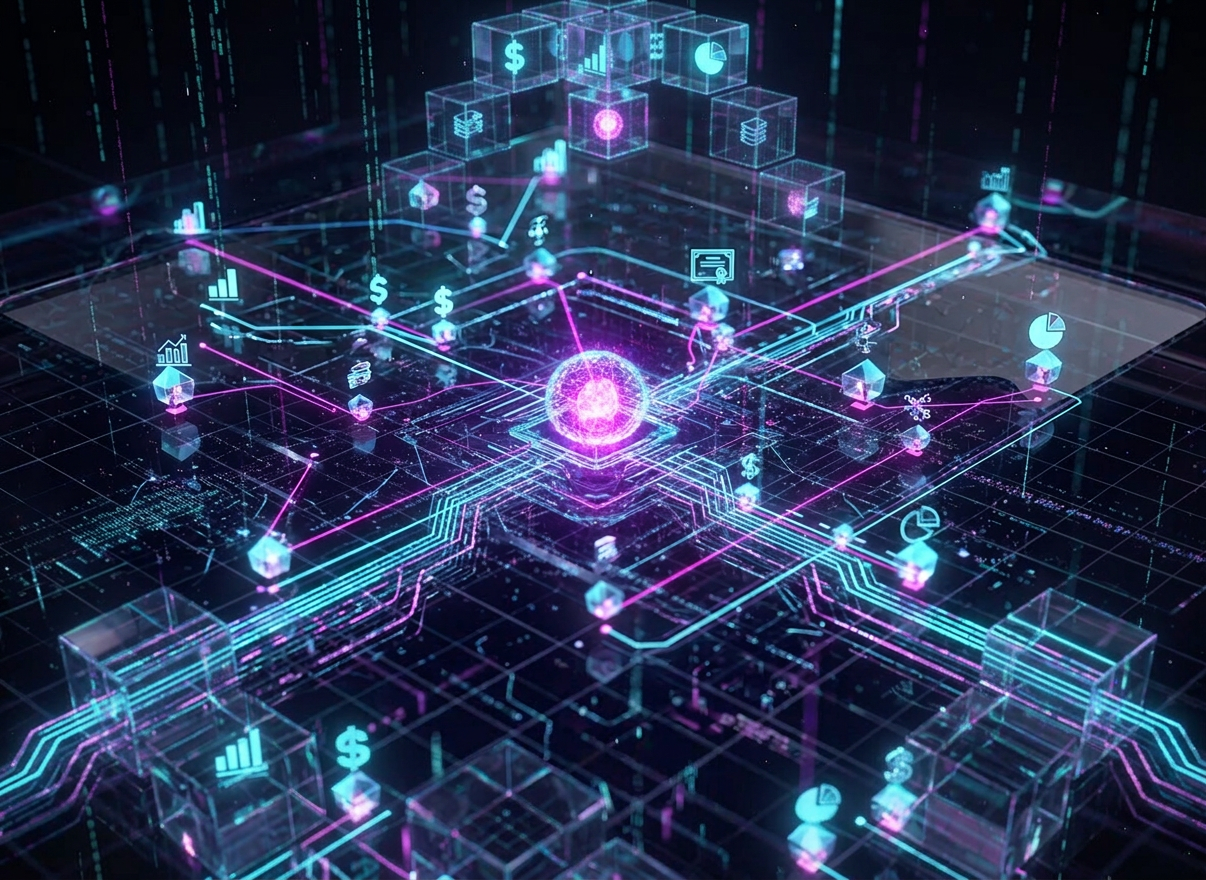

Hybrid Engineering Optimization Methods: Combining Global Search with Local Precision

The limitations of both gradient-based and metaheuristic methods in isolation have driven substantial research into hybrid approaches that combine global exploration with precise local refinement. This is the current practical frontier for simulation-heavy engineering optimization.

The JADE-GBO hybrid is a well-benchmarked example. It uses adaptive differential evolution for global exploration of promising regions, then applies gradient-based optimization for precise local refinement of the best candidates. On benchmarks covering truss topology optimization, fluid dynamic design, and control parameter tuning, the hybrid is reported to outperform either method alone in both final solution quality and convergence rate.
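The global-then-local pattern can be sketched as follows. This is a generic hybrid assembled for illustration, not the published JADE-GBO algorithm: a short run of classic DE (standing in for JADE's adaptive DE) locates the basin of the global minimum on a 2D double-well test function, and plain gradient descent (standing in for the gradient-based stage) polishes the best candidate. The test function, budgets, and step sizes are assumptions.

```python
import random

def f(x):
    # 2D double-well: shallow local minimum near (1.13, 0), global minimum near (-1.30, 0)
    return x[0] ** 4 - 3.0 * x[0] ** 2 + x[0] + x[1] ** 2

def grad_f(x):
    return [4.0 * x[0] ** 3 - 6.0 * x[0] + 1.0, 2.0 * x[1]]

def global_phase(f, lo, hi, dim=2, pop_size=20, generations=40, F=0.5, CR=0.9, seed=3):
    """Coarse DE/rand/1/bin search used only to find a promising basin."""
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    fit = [f(p) for p in pop]
    for _ in range(generations):
        for i in range(pop_size):
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)
            trial = [min(hi, max(lo, pop[a][d] + F * (pop[b][d] - pop[c][d])))
                     if (rng.random() < CR or d == j_rand) else pop[i][d]
                     for d in range(dim)]
            ft = f(trial)
            if ft <= fit[i]:
                pop[i], fit[i] = trial, ft
    return min(pop, key=f)

def local_phase(grad, x, lr=0.01, steps=2000):
    """Gradient-descent refinement of the best global candidate."""
    for _ in range(steps):
        x = [xi - lr * gi for xi, gi in zip(x, grad(x))]
    return x

x_global = global_phase(f, -3.0, 3.0)
x_refined = local_phase(grad_f, x_global)
print(x_global, x_refined)
```

The division of labour is the point: the population method spends its evaluations deciding which valley to enter, and the gradient method spends its iterations reaching that valley's floor precisely.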

Surrogate-assisted hybrids follow a similar logic. A cheap surrogate model, such as Kriging or a neural network, approximates the expensive simulation objective, allowing metaheuristic search to run affordably, with periodic high-fidelity evaluations to update the surrogate and maintain accuracy. This significantly reduces function evaluation counts compared to pure metaheuristic search, at the cost of surrogate modeling overhead.
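The surrogate loop can be illustrated in one dimension. To keep the sketch short and dependency-free, the surrogate here is a least-squares quadratic response surface rather than Kriging or a neural network, and `expensive` is a cheap analytic stand-in for a simulation. Each iteration fits the surrogate to every evaluated point, minimizes the surrogate (cheap), and spends exactly one expensive evaluation at the surrogate's minimizer.

```python
import math

def expensive(x):
    # Stand-in for a costly simulation; pretend each call takes hours
    return (x - 1.3) ** 2 + 0.1 * math.sin(3.0 * x)

def det3(m):
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def fit_quadratic(xs, ys):
    """Least-squares fit y ~ a + b*x + c*x**2 via the 3x3 normal equations (Cramer's rule)."""
    s = [sum(x ** k for x in xs) for k in range(5)]                  # sums of x^0 .. x^4
    t = [sum(y * x ** k for x, y in zip(xs, ys)) for k in range(3)]  # sums of y*x^0 .. y*x^2
    A = [[s[0], s[1], s[2]], [s[1], s[2], s[3]], [s[2], s[3], s[4]]]
    d = det3(A)
    coeffs = []
    for col in range(3):
        Ai = [row[:] for row in A]
        for r in range(3):
            Ai[r][col] = t[r]
        coeffs.append(det3(Ai) / d)
    return coeffs  # [a, b, c]

def surrogate_optimize(f, lo, hi, initial, iterations=5):
    xs = list(initial)
    ys = [f(x) for x in xs]  # initial expensive design of experiments
    for _ in range(iterations):
        a, b, c = fit_quadratic(xs, ys)
        if c > 0:
            x_next = min(hi, max(lo, -b / (2.0 * c)))  # minimizer of the cheap surrogate
        else:
            x_next = xs[ys.index(min(ys))]  # surrogate not convex: fall back to best sample
        xs.append(x_next)
        ys.append(f(x_next))  # one expensive evaluation per iteration
    i_best = ys.index(min(ys))
    return xs[i_best], ys[i_best], len(ys)

x_best, f_best, n_evals = surrogate_optimize(expensive, -1.0, 3.0, [-1.0, 0.0, 1.0, 2.0, 3.0])
print(x_best, f_best, n_evals)
```

Here the whole search costs 10 expensive evaluations (5 initial samples plus 5 infill points). Real workflows also need an infill criterion that balances exploration against exploitation, such as expected improvement in Kriging-based methods, so the surrogate does not keep sampling where it already believes the optimum lies.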

Quantum-inspired optimization for engineers extends this hybrid frontier further, replacing the metaheuristic component with probabilistic parallel search that achieves significantly better solution quality with fewer expensive function evaluations than either GA or surrogate-assisted approaches at scale.

How to Choose the Right Engineering Optimization Method for Your Problem

Method selection depends on four factors: objective function properties, design space dimensionality, evaluation cost per iteration, and whether the problem is single- or multi-objective. The framework below maps common engineering problem types to the appropriate method family.

The table above is a starting point. Most real engineering problems sit across multiple categories, which is why the direction of the field is toward hybrid and quantum-inspired approaches that handle the full problem class without sacrificing either global search quality or computational efficiency.

Quantum-Inspired Optimization Methods in Engineering: Where They Fit and What They Solve

Quantum-inspired optimization is not a replacement for gradient-based or metaheuristic methods. It is a structurally different approach to the problem class where both families reach their practical limits, and locating those limits requires understanding precisely why both classical families fail at scale.

Gradient-based methods fail when the problem is multimodal. Metaheuristics fail when function evaluation is expensive. Quantum-inspired methods address both constraints simultaneously; they explore solution spaces probabilistically and in parallel, finding globally better solutions with significantly fewer expensive simulation evaluations than population-based methods require.

- Parallel probabilistic search evaluates multiple solution states simultaneously rather than sequentially, reducing iteration counts in simulation-heavy workflows

- Quantum tunneling principles allow the algorithm to escape local optima that gradient-based methods and classical metaheuristics cannot move beyond

- Reduces the number of expensive CFD, FEA, or trajectory simulation evaluations required to reach near-optimal solutions

- Scales to high-dimensional combinatorial problems (structural topology, multi-satellite coordination, multi-objective aerodynamic design) where population-based methods become computationally prohibitive
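To make "probabilistic search inspired by quantum computing" concrete, the sketch below implements a simplified quantum-inspired evolutionary algorithm in the style of Han and Kim's QIEA, running entirely on classical hardware. Each candidate is a vector of Q-bit rotation angles rather than a fixed bit string: observing it samples a classical solution, and a rotation step shifts probability toward the best solution found so far. This is a generic, textbook-style illustration on a toy OneMax objective, not BQP's QIO solver (whose internals are not described in this guide), and it does not model quantum tunneling; all parameters are assumptions.

```python
import math
import random

def onemax(bits):
    # Toy fitness (maximized): number of ones; stands in for a combinatorial design objective
    return sum(bits)

def qiea(fitness, n_bits=20, pop_size=10, generations=200,
         delta=0.01 * math.pi, eps=0.1, seed=4):
    """Simplified QIEA: gene i of an individual is an angle theta with P(bit = 1) = sin(theta)**2."""
    rng = random.Random(seed)
    thetas = [[math.pi / 4] * n_bits for _ in range(pop_size)]  # equal superposition: P(1) = 0.5
    best_bits, best_fit = None, -math.inf
    for _ in range(generations):
        for q in thetas:
            # "Observe" the Q-bit individual to obtain a classical candidate
            bits = [1 if rng.random() < math.sin(t) ** 2 else 0 for t in q]
            fit = fitness(bits)
            if fit > best_fit:
                best_bits, best_fit = bits, fit
        for q in thetas:
            # Rotate each angle toward the best solution so far, keeping a margin eps
            # from 0 and pi/2 so that no bit ever becomes completely fixed
            for i, b in enumerate(best_bits):
                step = delta if b == 1 else -delta
                q[i] = min(math.pi / 2 - eps, max(eps, q[i] + step))
    return best_bits, best_fit

bits, score = qiea(onemax)
print(score, bits)
```

The whole population shares a probabilistic model of where good solutions lie, so each generation samples many related candidates at once; the eps margin keeps a small chance of flipping any bit, which lets the search recover from early mistakes.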

BQP's QIO solver runs on existing classical HPC infrastructure with no quantum hardware required. It delivered an additional 6% weight reduction over traditional solvers on structural optimization problems with specific strength and stiffness requirements, a result that directly reflects the difference between local and global search at the design level. In aerospace applications, BQP's platform demonstrated optimization of a 1,000-satellite constellation using QIO, a scale at which classical methods typically require significant problem simplification to complete within practical runtimes.

BQP integrates with STK, GMAT, MES, and ERP systems, enabling deployment within existing engineering workflows. Most teams validate results within 4–8 weeks of initial pilot engagement.

Engineering Optimization in Aerospace and Defense: The High-Stakes Application Class

Aerospace and defense represent the most demanding application class for engineering optimization, combining high-dimensional design spaces, expensive simulation evaluations, multi-objective trade-offs, and hard real-time requirements. Every method limitation discussed in this guide is present at once.

Classical gradient-based methods have been embedded in aerospace design workflows for decades, with adjoint CFD for aerodynamic shape optimization the primary example. They work well for shape refinement on problems where the design space is well-defined and the objective function is smooth. They are not suited for topology-level design decisions, multi-mission trade-off problems, or the combinatorial coordination problems that define modern aerospace operations.

Quantum optimization algorithms address the problem class gradient-based tools cannot reach. A defense contractor applying quantum-inspired optimization reduced mission planning time for a 12-drone reconnaissance mission from 8 hours to 22 minutes while simultaneously exploring 10,000x more candidate routes. The US Air Force Mobility Command achieved 18% fuel savings and 22% faster mission planning across 47 bases through quantum-inspired routing deployment.

For structural and design optimization, BQP's platform, which combines QIO with Physics-Informed Neural Networks (PINNs), embeds physical laws directly into the optimization process, reducing dependency on exhaustive CFD iteration cycles while maintaining full-physics accuracy. This is the engineering optimization architecture that aerospace and defense programs are adopting as design complexity outpaces what classical methods can handle within program timelines.

Matching Engineering Optimization Methods to Problem Complexity

Engineering optimization methods are not interchangeable. Gradient-based approaches are precise and fast on the right problem class. Metaheuristics handle complexity at the cost of evaluation count. Hybrid approaches extend the practical frontier. Quantum-inspired methods extend it further still, addressing the problem class where high dimensionality, expensive evaluations, and multimodal objective functions occur simultaneously.

The direction of the field is clear. As design problems grow in dimensionality, evaluation cost, and constraint complexity, the methods that explore solution spaces globally without requiring massive function evaluation counts become necessary, not optional.

That is the problem class quantum-inspired optimization is built for, and where BQP is delivering measurable engineering results across aerospace, defense, and advanced manufacturing today.

Ready to test quantum-inspired optimization on your engineering problem? Start your free trial and benchmark BQP against your current solver in 4–8 weeks.

Frequently Asked Questions About Engineering Optimization Methods

What are the main engineering optimization methods?

The main families are gradient-based methods (SQP, adjoint, conjugate gradient), metaheuristic methods (GA, PSO, differential evolution), topology optimization, multi-objective evolutionary algorithms, and hybrid approaches combining multiple families. Each is suited to specific problem structures; the right method depends on objective function properties, dimensionality, evaluation cost, and whether global or local search is the priority.

When should you use gradient-based vs metaheuristic optimization in engineering?

Gradient-based methods are best for smooth, convex, differentiable problems with reliable derivative information, with aerodynamic shape refinement the classic example. Metaheuristics are better when the objective function is non-differentiable, multimodal, or black-box. However, metaheuristics require many function evaluations, making them expensive when each evaluation involves a CFD or FEA simulation. When the objective is multimodal and each evaluation is expensive, quantum-inspired methods are the practical choice.

What is topology optimization in engineering design?

Topology optimization determines the optimal material distribution within a design domain, deciding where material should exist, not just how it should be shaped. The classical SIMP method uses gradient-based sensitivity analysis and is efficient but prone to local optima. Hybrid approaches combining genetic algorithms with topology methods improve global search quality at higher computational cost.

How do quantum-inspired methods improve engineering optimization?

Quantum-inspired optimization uses probabilistic, parallel search derived from quantum computing principles, running on classical HPC hardware. It escapes local optima and requires significantly fewer expensive simulation evaluations than population-based metaheuristics, making it particularly effective for high-dimensional, simulation-heavy engineering problems where both classical method families reach their limits simultaneously.

How do you select the right optimization method for a complex engineering problem?

Start with objective function properties: smooth and differentiable favors gradient-based methods; multimodal or black-box favors metaheuristic or quantum-inspired methods. Evaluation cost is the second key factor. When each function evaluation involves CFD or FEA, population-based methods become prohibitive, and quantum-inspired approaches, which converge with fewer evaluations, are the practical choice for high-dimensional, multi-objective engineering problems.
