Overview

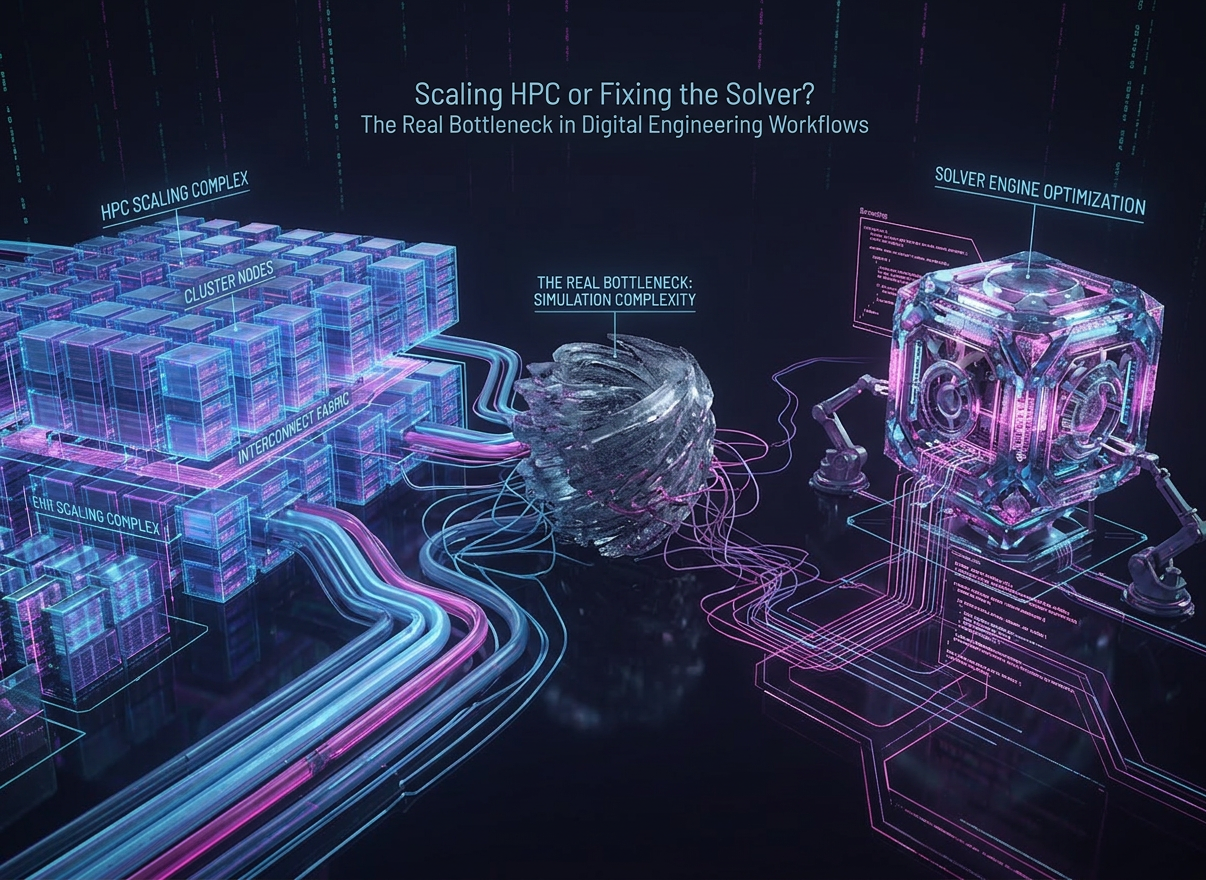

High-fidelity simulation and large-scale optimization have become central to modern digital engineering workflows. Advances in GPU computing, distributed HPC, and cloud infrastructure have significantly increased computational capacity.

However, in simulation-driven optimization pipelines—particularly in CFD, optimization, and system-level design—performance gains are no longer proportional to increases in compute resources.

To examine this gap, BQP spoke with:

- Dr. Sai, Optimization Scientist (Lead), BosonQ Psi

- Dr. Ferdin, HPC Systems Lead, BosonQ Psi

Evolution of Digital Engineering & Simulation-Driven Workflows

Q: How have digital engineering workflows evolved from a computational perspective?

Dr. Sai:

- Shift from single runs to iterative optimization loops

- Simulation now embedded inside optimization

- Total cost driven by repeated evaluations

If you look at how digital engineering workflows have evolved, the biggest shift is that we’re no longer running simulations as isolated tasks. Today, simulation is embedded directly inside optimization loops.

So instead of solving a model once, teams are solving it repeatedly—sometimes thousands of times—each with different parameters. That creates a nested structure where the outer loop explores design variations, and the inner loop runs the actual physics.

At that point, performance is no longer just about how fast a single simulation runs. It becomes about how efficiently the optimizer navigates the design space and how many evaluations are required to converge.
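
A minimal sketch of this nested structure, assuming a toy quadratic objective in place of a real physics solve and plain random search as the outer loop. The names `simulate` and `random_search` and the 120-second solve time are hypothetical, chosen only to show how total cost follows the evaluation count.

```python
import random

def simulate(x):
    """Stand-in for an expensive physics solve (hypothetical quadratic objective)."""
    return sum((xi - 0.3) ** 2 for xi in x)

def random_search(n_iters, dim, seed=0):
    """Outer loop proposes designs; each proposal triggers one inner 'simulation'."""
    rng = random.Random(seed)
    best_x, best_f = None, float("inf")
    n_evals = 0
    for _ in range(n_iters):
        x = [rng.uniform(-1.0, 1.0) for _ in range(dim)]
        f = simulate(x)          # inner loop: the physics
        n_evals += 1
        if f < best_f:           # outer loop: track the best design so far
            best_x, best_f = x, f
    return best_x, best_f, n_evals

best_x, best_f, n_evals = random_search(n_iters=1000, dim=3)

# Assumed cost of a single solve; the total scales with the evaluation count.
seconds_per_solve = 120.0
total_hours = n_evals * seconds_per_solve / 3600
print(f"{n_evals} evaluations, about {total_hours:.1f} hours of solver time")
```

Halving the time per solve and halving the number of evaluations reduce `total_hours` by the same factor, which is why the behavior of the optimizer matters as much as solver speed.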

Q: What role does HPC play in this structure?

Dr. Ferdin:

- Simulation layer is already highly optimized

- HPC scales well for single design evaluations

- Optimization layer introduces scaling complexity

For roughly three decades, effort has gone into accelerating simulations, which has produced highly performant codes that leverage domain-decomposition strategies and MPI to scale across multi-core CPUs and multi-node clusters. As a result, we can obtain high-fidelity solutions to PDEs, multiphysics models, and CFD problems for a given design point within a reasonable timeframe.

However, this acceleration does not translate well to the optimization-simulation workflow described by Dr. Sai.

The outer loop, or optimizer, demands many design points, and that is where HPC architecture plays a crucial role. With the right strategy, the optimization layer is highly amenable to parallelism. For instance, consider the island model, where multiple instances of the same optimization problem run simultaneously on sub-clusters. Each sub-cluster explores the design space independently and can inform its sister sub-clusters through periodic exchange of its best solution, a strategy known as migration in island-model parlance.
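
The island model can be sketched as follows. This is an illustrative toy, not the speakers' implementation: the islands run one after another in a single process rather than on sub-clusters, and the objective, the mutation scheme, the ring topology, and every parameter value are assumptions.

```python
import random

def objective(x):
    # Hypothetical stand-in for a simulation result (lower is better).
    return sum(xi * xi for xi in x)

def evolve(pop, rng, sigma=0.1):
    """One generation: mutate each member, keep the better of parent and child."""
    new_pop = []
    for x in pop:
        child = [xi + rng.gauss(0.0, sigma) for xi in x]
        new_pop.append(child if objective(child) < objective(x) else x)
    return new_pop

def migrate(islands):
    """Ring migration: each island's best replaces the next island's worst."""
    bests = [min(pop, key=objective) for pop in islands]
    for i, pop in enumerate(islands):
        incoming = bests[(i - 1) % len(islands)]
        worst = max(range(len(pop)), key=lambda j: objective(pop[j]))
        pop[worst] = list(incoming)

def island_model(n_islands=4, pop_size=8, dim=3, gens=50, interval=10, seed=0):
    rng = random.Random(seed)
    islands = [
        [[rng.uniform(-5.0, 5.0) for _ in range(dim)] for _ in range(pop_size)]
        for _ in range(n_islands)
    ]
    initial_best = min(objective(x) for pop in islands for x in pop)
    for gen in range(1, gens + 1):
        # On a real cluster each island would advance on its own sub-cluster.
        islands = [evolve(pop, rng) for pop in islands]
        if gen % interval == 0:
            migrate(islands)  # the only step that needs communication
    final_best = min(objective(x) for pop in islands for x in pop)
    return initial_best, final_best

initial_best, final_best = island_model()
print(f"best objective: {initial_best:.3f} -> {final_best:.3f}")
```

The `interval` parameter controls how often islands exchange solutions; a smaller value shares information sooner but implies more frequent communication in a distributed setting.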

Challenges in Scaling HPC for Optimization Workloads

Q: Why do HPC systems struggle with large-scale optimization problems?

Dr. Ferdin:

- Optimization is stochastic and sequentially dependent

- Load balancing becomes inefficient at scale

- Redundant evaluations reduce effective output

Assuming that the simulation layer uses the most advanced, performant solver available, the critical pain point is orchestration and scheduling. Optimization is an ordered stochastic process, which means that what is simulated in the present iteration is largely informed by the preceding iteration. As a result, convergence characteristics, and consequently computational times, can vary between iterations. This makes forecasting the total optimization time difficult.

In evolutionary algorithms, where many candidate solutions are evaluated simultaneously across cores, load balancing becomes a Pyrrhic exercise. If the load balancer is inefficient, machine time is wasted; if it is made efficient, the load balancer itself consumes computational resources.
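
The trade-off can be illustrated with a small scheduling model. This is a sketch under stated assumptions: the task durations are invented, `static_makespan` and `dynamic_makespan` are hypothetical helper names, and `dispatch_overhead` is a crude stand-in for the cost of running the balancer.

```python
import heapq

def static_makespan(durations, n_workers):
    """Round-robin pre-assignment: task i goes to worker i % n_workers."""
    loads = [0.0] * n_workers
    for i, d in enumerate(durations):
        loads[i % n_workers] += d
    return max(loads)

def dynamic_makespan(durations, n_workers, dispatch_overhead=0.0):
    """Work queue: the next task goes to whichever worker frees up first."""
    free_at = [0.0] * n_workers
    heapq.heapify(free_at)
    for d in durations:
        t = heapq.heappop(free_at)                       # earliest-free worker
        heapq.heappush(free_at, t + dispatch_overhead + d)
    return max(free_at)

# Assumed solve times for eight candidates: two are ten times slower.
durations = [10, 1, 1, 1, 10, 1, 1, 1]

static = static_makespan(durations, n_workers=4)
dynamic = dynamic_makespan(durations, n_workers=4)
dynamic_costly = dynamic_makespan(durations, n_workers=4, dispatch_overhead=0.5)
print(static, dynamic, dynamic_costly)
```

With these numbers the static assignment finishes in 20 time units because both slow candidates land on the same worker, while the work queue finishes in 11. Charging 0.5 units per dispatch raises that to 12, a small version of the balancer consuming resources of its own.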

Another challenge in large-scale optimization problems is the sheer number of design points that need to be evaluated. The issue is not so much the compute load, but rather that most of these design points may not contribute meaningfully towards directing the optimization process. In island models this can be reduced by fostering communication and information sharing between sub-clusters, but this comes at the price of synchronization and communication overheads.

Q: How does this differ from scaling pure simulation workloads?

Dr. Ferdin:

- Simulation workloads are deterministic

- Resource needs are predictable

- Optimization introduces unpredictability

Pure simulation workloads are well defined. For instance, a CFD problem has its initial and boundary conditions specified, the mesh is fixed (at least for static-mesh simulations), and the flow physics regime is generally known through dimensionless numbers (such as the Reynolds number, which indicates the onset of turbulence). This makes the required computational resources predictable.

Optimization workloads are ordered chaos. There is no certainty that can be relied on to predict convergence characteristics. The result is that even if the computational load of the underlying solver is known, the needs of the overall process are unknowable. This is why the optimization-simulation workflow scales sub-linearly with added compute.

Observed Inefficiencies in HPC-Driven Workflows

Q: What inefficiencies are most prominent in real-world systems?

Dr. Ferdin:

- Legacy solvers underutilize modern hardware

- GPU optimization is non-trivial and architecture-specific

- Parallel strategies introduce redundancy and overhead

Most of our discussion has assumed that the underlying solvers are the most performant. However, most of these codes have missed the memo on many-core accelerators, such as GPUs. Indeed, even today, only a handful of CFD solvers utilize GPUs. The shift is not trivial; many of these legacy codes were not designed to scale to many-core architectures, and the divide-and-conquer strategy that served them well on CPUs results in severe underutilization of GPUs.

There is tangible inertia in porting these massive codebases to modern GPUs. Even if the effort were undertaken, achieving peak performance on a GPU requires in-depth knowledge of the specific GPU. The nuances and minutiae that can be employed to run a code efficiently on an NVIDIA V100 will have to be re-engineered for an NVIDIA A100, and completely reimagined for an AMD MI300X.

The optimization layer is a different beast. Although in principle it is highly amenable to parallelism, it too is riddled with pitfalls. Consider again the island model, where multiple instances of the same optimization problem run simultaneously on sub-clusters. Each sub-cluster explores the design space independently and can inform its sister sub-clusters through periodic exchange of its best solution, a strategy known as migration in island-model parlance.

The first problem is redundant sampling: design points in similar regions of the solution landscape get evaluated multiple times by the sub-clusters. The second is the weak correlation between the work done by the sub-clusters. While migration mitigates both, frequent migration results in data-movement bottlenecks, such as bandwidth saturation, and synchronization overheads.
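
One simple way to make redundant sampling visible is to cache results keyed on a rounded design point. This is a sketch, not a technique named in the interview: `EvaluationCache` is a hypothetical helper, and treating points that agree to two decimals as "the same design" is an assumption that trades accuracy for fewer solves.

```python
class EvaluationCache:
    """Wraps a simulation callable and reuses results for near-identical designs."""

    def __init__(self, simulate, decimals=2):
        self.simulate = simulate
        self.decimals = decimals   # assumed tolerance for calling two designs equal
        self.store = {}
        self.hits = 0              # evaluations avoided
        self.misses = 0            # evaluations actually run

    def __call__(self, x):
        key = tuple(round(xi, self.decimals) for xi in x)
        if key in self.store:
            self.hits += 1
        else:
            self.misses += 1
            self.store[key] = self.simulate(x)
        return self.store[key]

# Toy objective standing in for a solver call.
cached = EvaluationCache(lambda x: sum(xi * xi for xi in x))

# Two "islands" propose nearly the same design; a third point is distinct.
for point in [(0.501, 0.2), (0.499, 0.2), (0.9, 0.1)]:
    cached(point)

print(f"solves run: {cached.misses}, solves avoided: {cached.hits}")
```

On a real cluster the cache itself would have to be shared between sub-clusters, which reintroduces the communication and synchronization costs described above.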

Q: How do these inefficiencies affect system-level performance?

Dr. Ferdin:

- High utilization can mask inefficiency

- Compute may be spent on low-value regions

- Algorithm quality determines productivity

The key metric for HPC efficiency is utilization. Sadly, for optimization workloads, this metric can be a trap. High utilization does not translate to productivity. An optimizer could be evaluating many design points around a local minimum repeatedly, which shows high throughput, while the optimization itself is only inching its way out of that local minimum. This shifts the onus of performing useful computations to the algorithm; it is from there that the intelligence and logic to avoid evaluating low-value design points must arise.
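
The gap between utilization and productivity can be made concrete with a simple measure: the fraction of evaluations that improved the best-known objective. This is an assumed proxy, not a metric defined by the speakers, and the objective history below is invented for illustration.

```python
def productive_fraction(history, min_gain=0.0):
    """Share of evaluations that improved the best-so-far value by more than min_gain."""
    if not history:
        return 0.0
    best = float("inf")
    productive = 0
    for f in history:
        if best - f > min_gain:
            productive += 1
            best = f
    return productive / len(history)

# Objective values in evaluation order (lower is better). The cluster was busy
# for all eight solves, yet only three of them moved the optimization forward.
history = [5.0, 4.0, 4.0, 4.1, 3.9, 3.9, 3.95, 3.9]
fraction = productive_fraction(history)
print(f"utilization: 100%, productive evaluations: {fraction:.1%}")
```

A scheduler would report the same utilization whether this fraction is high or low, which is why the measure has to come from the optimization layer.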

Limitations of Classical Optimization in Engineering Systems

Q: What are the core limitations of classical optimization methods in these contexts?

Dr. Sai:

- Assumptions break in real engineering systems

- Landscapes are noisy and non-convex

- Leads to unstable convergence

Most classical optimization methods were built on assumptions that don’t really hold in real-world engineering problems.

In practice, you’re dealing with noisy gradients, highly non-convex objective functions, and complex constraints that are difficult to model cleanly. So methods that rely on smoothness or gradient information tend to struggle.

What you typically see is premature convergence, sensitivity to where you start, and a lot of wasted computation exploring regions that don’t meaningfully improve the solution.

Q: How does dimensionality affect solver behavior?

Dr. Sai:

- Search space expands exponentially

- Local minima become dense

- Sampling becomes increasingly inefficient

As dimensionality increases, the problem becomes fundamentally harder.

The search space grows exponentially, and at the same time, the number of local minima increases. So the optimizer has a much harder time finding good regions, let alone the global optimum.

In practical terms, that means you need far more evaluations just to maintain coverage. And at some point, the number of required evaluations grows faster than what additional compute can realistically handle.
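
A back-of-the-envelope calculation shows the effect. It assumes full grid coverage at 10 samples per design variable, a 60-second solve, and perfect scaling over 1,000 workers; all three numbers are illustrative, and real optimizers do not sample on a full grid.

```python
def grid_evaluations(points_per_axis, dim):
    """Evaluations needed for a full grid at the given resolution per axis."""
    return points_per_axis ** dim

def wall_clock_days(n_evals, seconds_per_eval, n_workers):
    """Idealized wall time, assuming perfect parallel scaling and no overhead."""
    return n_evals * seconds_per_eval / n_workers / 86400

for dim in (2, 4, 8, 16):
    n = grid_evaluations(10, dim)
    days = wall_clock_days(n, seconds_per_eval=60.0, n_workers=1000)
    print(f"dim={dim:2d}  evaluations={n:.1e}  wall time={days:.3g} days")
```

Doubling the worker count halves the wall time, while adding a single design variable multiplies it by ten, so the required work outpaces any realistic increase in hardware.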

Mismatch Between Algorithms and HPC Architectures

Q: Is there a structural mismatch between optimization algorithms and HPC systems?

Dr. Sai:

- Algorithms are inherently sequential

- Limited parallel awareness

- Leads to underutilized HPC

Yes, there is a clear mismatch.

Most optimization algorithms weren’t designed with modern parallel architectures in mind. They operate sequentially, making decisions step by step, often based on local information.

So even if you have access to distributed systems or GPUs, the algorithm itself limits how much of that compute you can actually use. That’s where a lot of inefficiency comes from.
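
Amdahl's law, a standard model that is not cited in the interview, gives a rough feel for this limit. The sketch assumes a fixed fraction of the optimization is strictly sequential (for example, the decision step between batches); the 10% figure is purely illustrative.

```python
def amdahl_speedup(sequential_fraction, n_workers):
    """Upper bound on speedup when part of the work cannot be parallelized."""
    parallel_fraction = 1.0 - sequential_fraction
    return 1.0 / (sequential_fraction + parallel_fraction / n_workers)

# Assumed: 10% of the optimizer's work is inherently step-by-step.
for n_workers in (8, 64, 1000):
    print(n_workers, round(amdahl_speedup(0.10, n_workers), 2))
```

Under this assumption, 1,000 workers yield a speedup just under 10x, since the bound approaches 1 divided by the sequential fraction regardless of how much hardware is added.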

Reframing the Problem: Search Efficiency

Q: How should teams rethink optimization in this context?

Dr. Sai:

- Shift from runtime to evaluation quality

- Focus on reducing wasted computation

- Treat optimization as a search problem

The way to think about this has to change.

Instead of focusing only on how fast each simulation runs, the focus should be on how many of those simulations are actually useful.

If your optimizer is evaluating points that don’t provide meaningful information, then you’re just consuming compute without making progress. So the goal should be to minimize ineffective evaluations.

That’s really what defines efficiency at scale.

Q: What defines search efficiency in large-scale systems?

Dr. Sai:

- Broad and meaningful exploration

- Ability to escape local minima

- High information gain per evaluation

Search efficiency is really about how intelligently you explore the design space.

You want good coverage, the ability to move out of local minima, and a diverse set of candidate solutions. Most importantly, each evaluation should add meaningful information.

It’s not about evaluating more points—it’s about evaluating better ones.

Impact on Engineering Workflows

Q: What changes for simulation and optimization teams?

Dr. Sai:

- Fewer iterations required

- More stable convergence

- Reduced manual tuning

For engineering teams, the impact is quite direct.

You end up needing fewer iterations to reach a solution, and the convergence behavior becomes much more stable. That reduces the need for manual tuning and repeated trial-and-error.

Overall, it makes the workflow more predictable and far more efficient.

Conclusion

The bottleneck in modern digital engineering workflows is no longer compute capacity—it is solver efficiency and search intelligence.

Scaling HPC without improving optimization strategies leads to diminishing returns.

The next phase of progress will come from:

- Better algorithms

- HPC-aware optimization design

- Maximizing value per computation

The constraint is no longer compute. It is how effectively it is used.
